Well, there should have been an optimistic post about my vulnerability analysis & classification pet-project. Something like “blah-blah-blah the situation is pretty bad, tons of vulnerabilities and it’s not clear which of them can be used by attackers. BUT there is a way how to make it better using trivial automation“. And so on. It seems that it won’t be any time soon. ¯\_(ツ)_/¯

I’ve spent several weekends on making some code that takes vulnerability description and other related formalized data to “separate the wheat from the chaff”. And what I get doesn’t look like some universal solution at all.

Pretty frustrating, but still an interesting experience and great protection from being charmed by trendy and shiny “predictive prioritization”.

Literally, when you start analyzing this vulnerability-related stuff every your assumption becomes wrong:

- that vulnerability description is good enough to get an idea how the vulnerability can be exploited (let’s discuss it in this post);

- that CVSS characterizes the vulnerability somehow;

- that the links to related objects (read: exploits) can be actually used for prioritization.

Actually, there is no reliable data that can be analyzed, trash is everywhere and everybody lies 😉

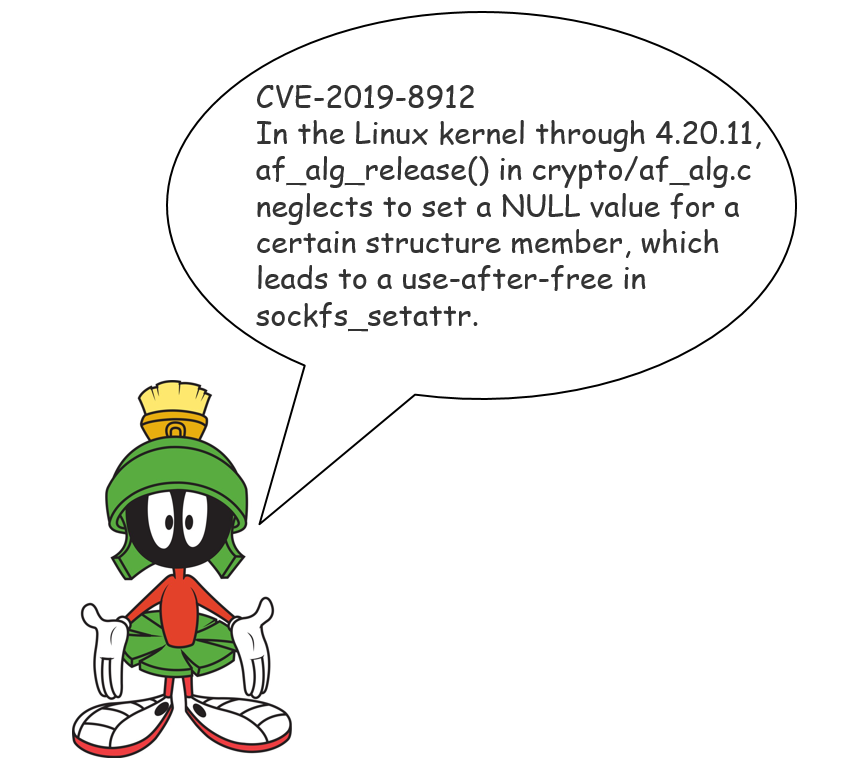

Let’s start from the vulnerability description. Great example is the last week critical Linux kernel vulnerability CVE-2019-8912.

Continue reading