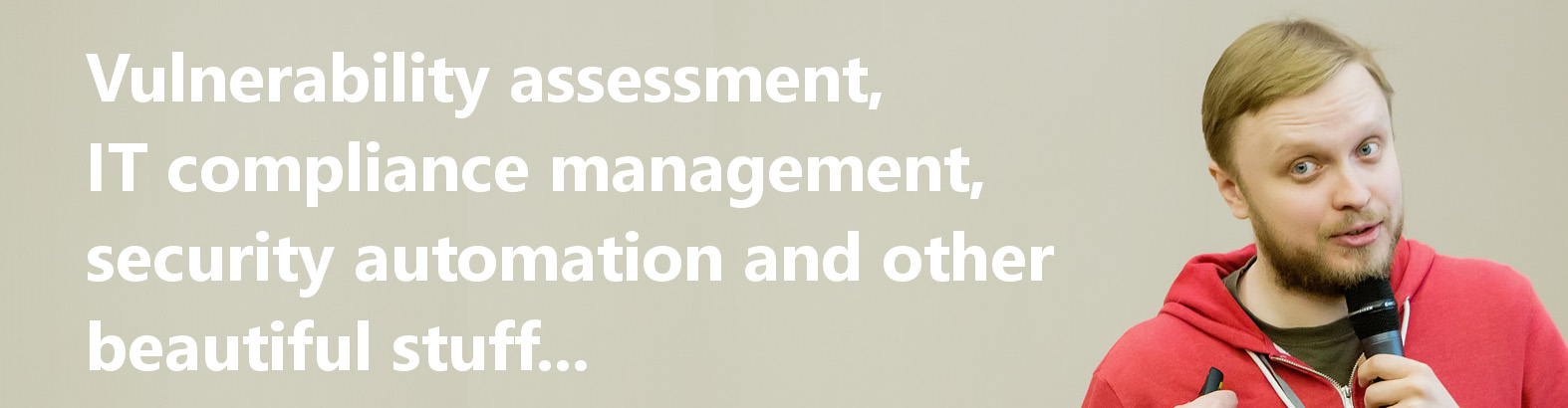

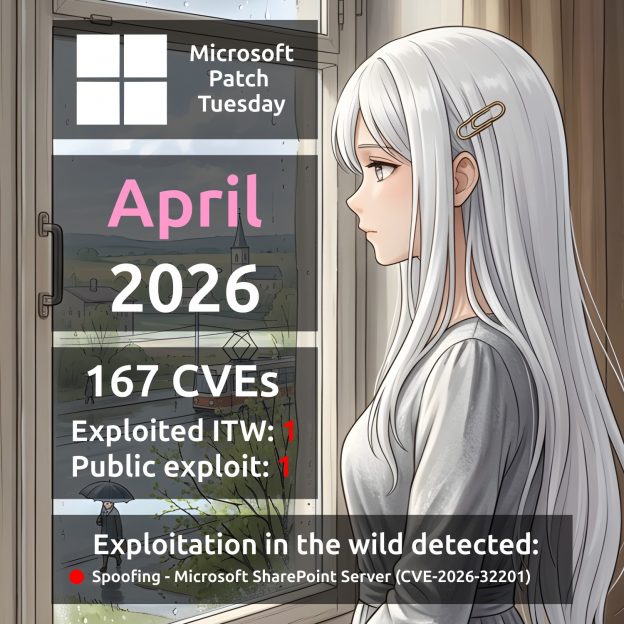

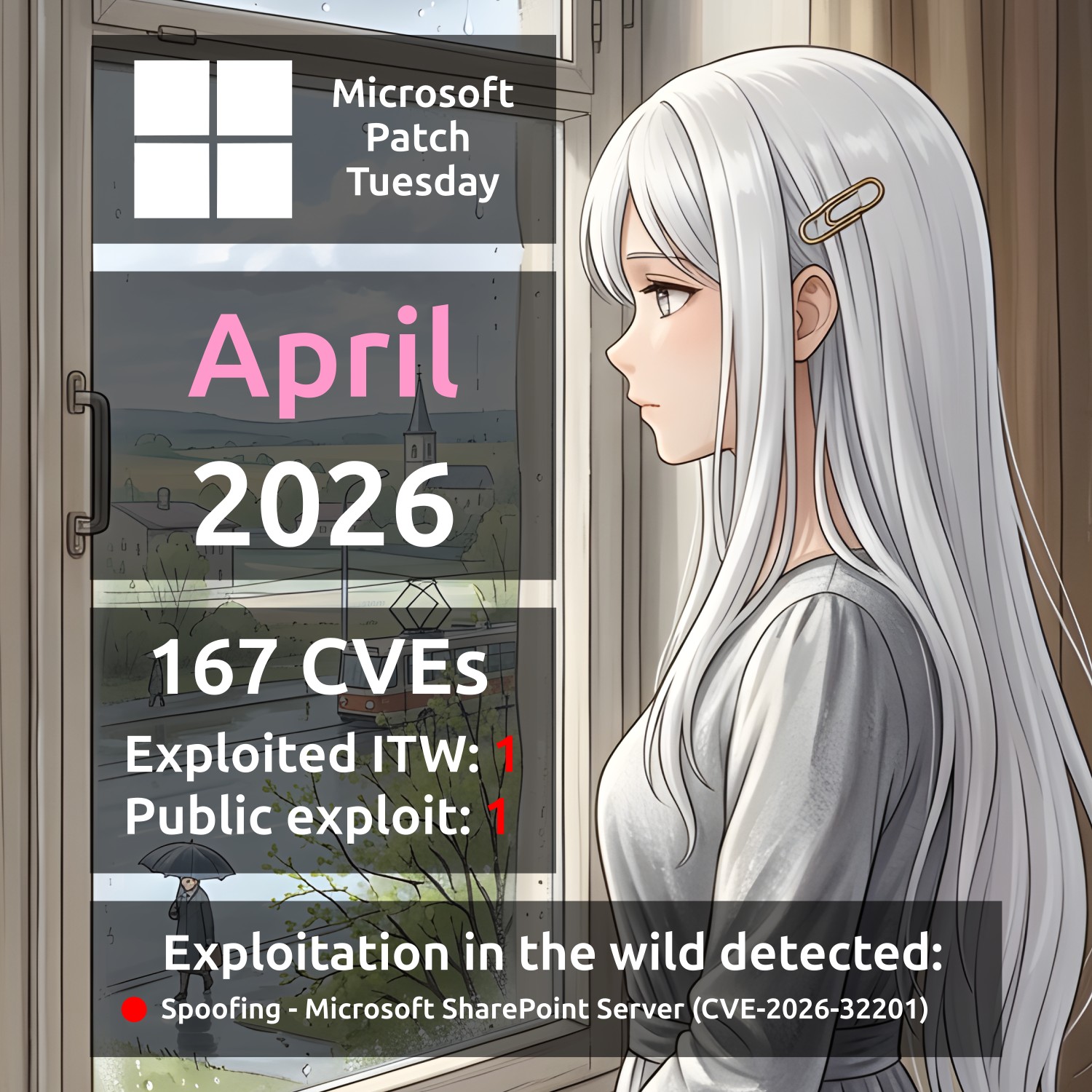

April Microsoft Patch Tuesday. It fixes a total of 167 vulnerabilities, about twice as many as in March. One vulnerability is already being exploited in the wild:

🔻 Spoofing – Microsoft SharePoint Server (CVE-2026-32201). ZDI experts say “Spoofing bugs in SharePoint often manifest as cross-site scripting (XSS) bugs”. “An attacker who successfully exploited the vulnerability could view some sensitive information (Confidentiality), make changes to disclosed information (Integrity), but cannot limit access to the resource (Availability)”. There is no information yet on how widely it is being used in attacks, but do not delay patching, especially if your SharePoint server is exposed to the Internet.

Formally, none of the vulnerabilities has a public exploit yet. However, there are strong indications that a public exploit may already exist for one of them:

🔸 EoP – Microsoft Defender (CVE-2026-33825). “Insufficient granularity of access control” in Microsoft Defender allows a logged-in attacker to gain higher privileges on a local system. Tenable and ZDI say the bug looks similar to the BlueHammer zero-day, for which a public exploit was released on GitHub on April 3. The researcher who published it, Chaotic Eclipse, criticized Microsoft’s disclosure process. ZDI says the exploit is real, but exploitation is unstable and not always reliable.

Other important issues:

🔹 RCE – Windows Active Directory (CVE-2026-33826). To exploit this, the attacker must have an account. The attacker sends a specially crafted RPC request to a vulnerable server, which can lead to code execution. Microsoft says the attacker must be in the same restricted Active Directory domain as the target system.

🔹 RCE – Windows Internet Key Exchange (IKE) Service Extensions (CVE-2026-33824). ZDI says this vulnerability is wormable, meaning it could allow malware to spread automatically between systems. It affects systems with IKE enabled, which creates a large attack surface. Microsoft recommends blocking UDP ports 500 and 4500 at the network edge. However, attackers inside the network can still use it for lateral movement. Patch quickly if you use IKE.

🔹 RCE – Windows TCP/IP (CVE-2026-33827). ZDI also says this may be wormable, especially on systems using IPv6 and IPSec. A race condition makes it harder to exploit, but similar bugs are often exploited at Pwn2Own, so you should not rely on that difficulty. If you use IPv6, test and deploy the patch quickly before exploits appear.

🔹 EoP – Windows Push Notifications (CVE-2026-26167). This Patch Tuesday includes several sandbox escape vulnerabilities, including in Push Notifications, AFD for Winsock, Windows Management Services, and User Interface Core. CVE-2026-26167 (Push Notifications) is the most important because it is the only one with low attack complexity. The others require winning a race condition (AC:H).

🔹 Spoofing – Remote Desktop (CVE-2026-26151). Weak warnings in the Remote Desktop interface allow a network attacker to trick a user into opening a specially crafted file, leading to spoofing. The issue was found by the UK National Cyber Security Centre (NCSC).
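
The ordering used in this post (exploited in the wild first, then a suspected public exploit, then everything else) can be sketched as a small triage script. This is only an illustrative sketch: the `Vuln` class, its field names, and the `priority` tiers are hypothetical, and ranking wormable bugs ahead of the remaining ones is an assumption, not something the vendors prescribe. The flags are taken from the descriptions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vuln:
    """One entry from this digest (hypothetical structure, for illustration)."""
    cve: str
    component: str
    kind: str
    exploited_in_wild: bool = False
    public_exploit_suspected: bool = False
    wormable: bool = False


# Flags reflect what the post says about each CVE, nothing more.
VULNS = [
    Vuln("CVE-2026-26151", "Remote Desktop", "Spoofing"),
    Vuln("CVE-2026-33824", "Windows IKE Service Extensions", "RCE",
         wormable=True),
    Vuln("CVE-2026-33825", "Microsoft Defender", "EoP",
         public_exploit_suspected=True),
    Vuln("CVE-2026-33826", "Windows Active Directory", "RCE"),
    # ZDI says this one "may be" wormable; treated as wormable here.
    Vuln("CVE-2026-33827", "Windows TCP/IP", "RCE", wormable=True),
    Vuln("CVE-2026-26167", "Windows Push Notifications", "EoP"),
    Vuln("CVE-2026-32201", "Microsoft SharePoint Server", "Spoofing",
         exploited_in_wild=True),
]


def priority(v: Vuln) -> int:
    """Lower number means patch sooner (assumed tiers, not an official scale)."""
    if v.exploited_in_wild:
        return 0
    if v.public_exploit_suspected:
        return 1
    if v.wormable:
        return 2
    return 3


def triage(vulns: list[Vuln]) -> list[Vuln]:
    """Return the entries ordered by priority; ties keep their input order."""
    return sorted(vulns, key=priority)


if __name__ == "__main__":
    for v in triage(VULNS):
        print(f"tier {priority(v)}: {v.cve} ({v.kind}, {v.component})")
```

In a real environment the tiers would also need to account for exposure, for example whether SharePoint is reachable from the Internet or whether IKE and IPv6 are in use at all.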