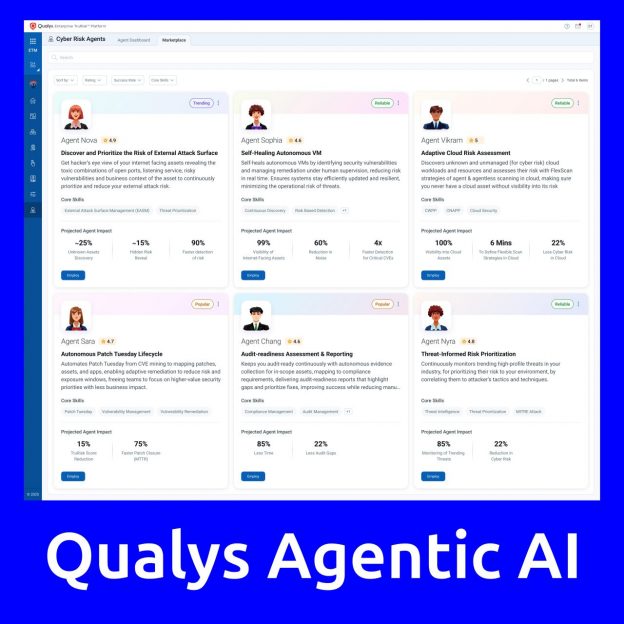

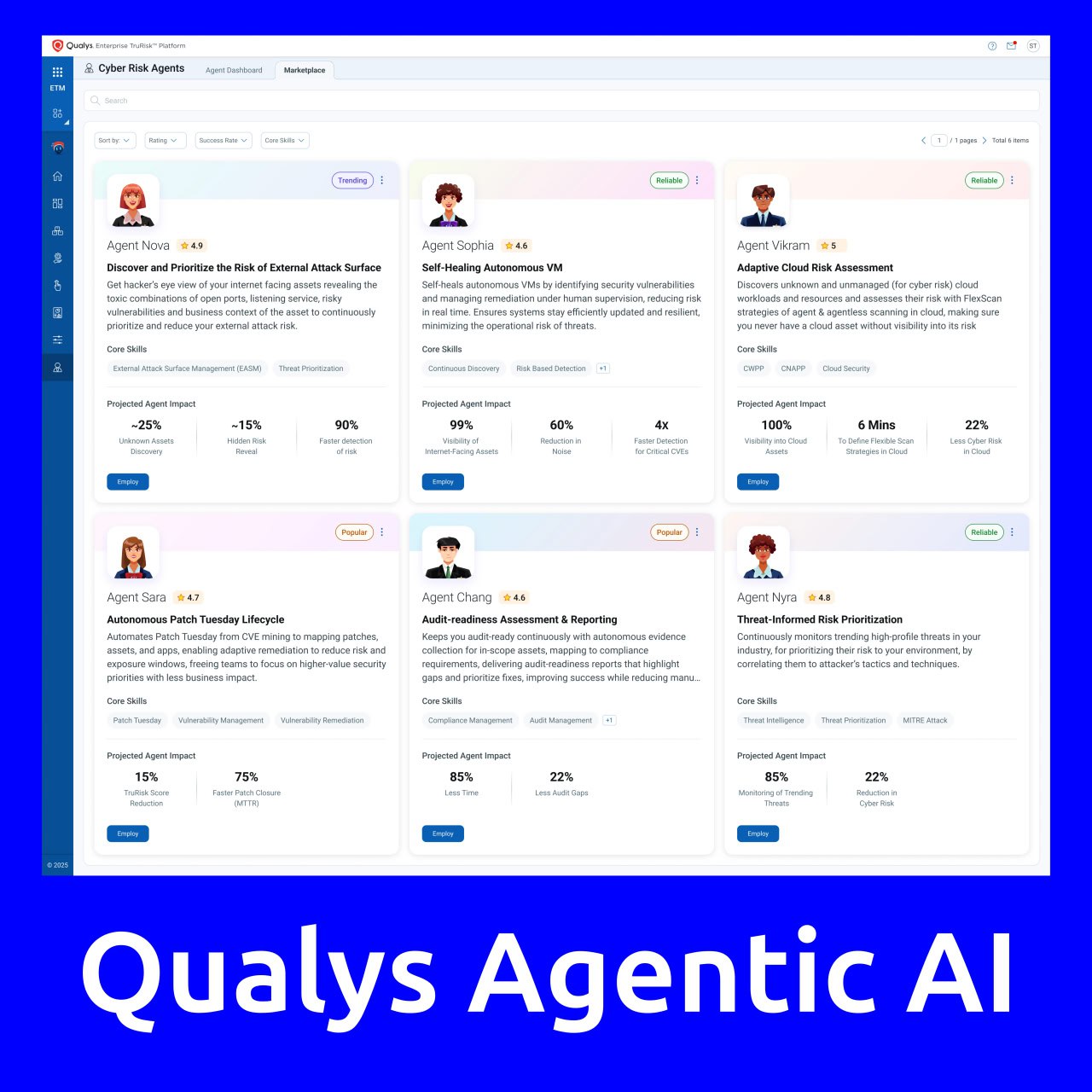

Qualys has introduced Agentic AI, a solution for autonomous cyber risk management. As part of this solution, Qualys provides ready-to-use Cyber Risk Agents that operate autonomously and act as an additional, skilled digital workforce. Beyond detecting issues and providing analytics, Agentic AI autonomously identifies critical risks, prioritizes them, and launches targeted remediation workflows.

Agents available on the marketplace:

🔹 Identification and prioritization of risks related to external attacks

🔹 Adaptive cloud risk assessment

🔹 Audit readiness evaluation and reporting

🔹 Threat-based risk prioritization

🔹 Autonomous “Microsoft Patch Tuesday” cycle

🔹 Self-Healing agent for vulnerability management

Qualys also introduced the Cyber Risk Assistant, a guided interface that transforms risk data into context-aware actions with autonomous execution.