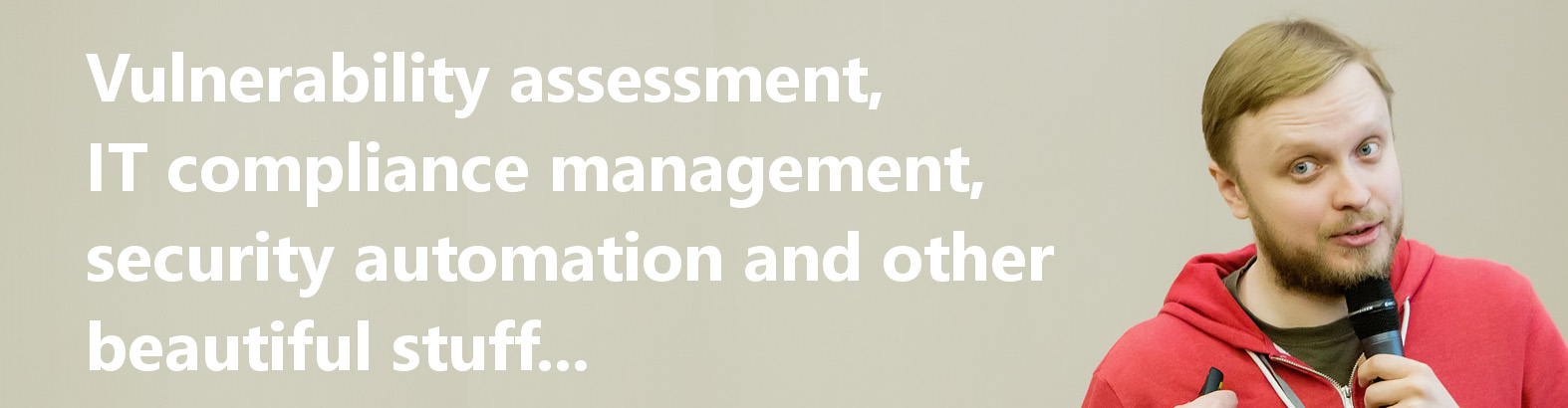

March Linux Patch Wednesday. In March, Linux vendors began addressing 575 vulnerabilities, 57 fewer than in February. Of these, 93 are in the Linux kernel (⬇️ a sharp drop from 305 in February). Two vulnerabilities show signs of in-the-wild exploitation:

🔻 RCE – Chromium (CVE-2026-3909, CVE-2026-3910)

Additionally, 130 (❗️) vulnerabilities have public exploits available or indications that exploits exist. Notable ones include:

🔸 RCE – Caddy (CVE-2026-27590), NLTK (CVE-2025-14009), Rollup (CVE-2026-27606), GVfs (CVE-2026-28296), SPIP (CVE-2026-27475), OpenStack Vitrage (CVE-2026-28370)

🔸 AuthBypass – Curl (CVE-2026-3783), coTURN (CVE-2026-27624), Libsoup (CVE-2026-3099)

🔸 InfDisc – Glances (CVE-2026-30928, CVE-2026-32596)

🔸 PathTrav – gSOAP (CVE-2019-25355), basic-ftp (CVE-2026-27699)

🔸 EoP – Snapd (CVE-2026-3888), GNU Inetutils (CVE-2026-28372)

🔸 SFB – Caddy (CVE-2026-27585, CVE-2026-27587/88/89), Keycloak (CVE-2026-1529), PyJWT (CVE-2026-32597), Authlib (CVE-2026-27962, CVE-2026-28498, CVE-2026-28802)

🔸 CodeInj – lxml_html_clean (CVE-2026-28350), ormar (CVE-2026-26198)

🔸 SSRF – Libsoup (CVE-2026-3632)