On May 21, I spoke at the PHDays 9 conference. I talked about new methods of Vulnerability Prioritization in the products of Vulnerability Management vendors.

During my 15-minute time slot, I defined the problems that this new technology has to solve, showed why these problems could NOT be solved using existing frameworks (CVSS), described what we currently have on the market and, as usual, criticized VM vendors and their solutions a little bit.

Here is the video of my presentation in Russian:

And this one is with simultaneous translation into English. Unfortunately, I didn't know that there would be a translation, so I spoke quickly (the natural way for a fast track) and used specific slang. That made the translator's task significantly harder. That's why I can't really advise watching this translated video; it might be better to read the full write-up below. By the way, I was posting parts of the write-up in my Telegram channel avleonovcom in real time. Currently Telegram is my main blogging platform, so I invite you to follow me there. 😉

Presentation slides:

Truly revolutionary year for VM

I think that 2019 is the best and truly revolutionary year for the whole Vulnerability Management industry, since top VM vendors (well, at least 2 of them) finally publicly recognized the problem with Vulnerability Prioritization and began to offer some solutions.

The problem is that most vulnerabilities that a Vulnerability Scanner can detect are actually unexploitable and worthless for an attacker. And it's hard to say exactly which ones. These can be vulnerabilities labeled as "Critical" or "High" level, or marked "Exploit exists".

And you still have to fix such unexploitable vulnerabilities and face negative reactions from IT because of unnecessary remediation efforts, downtime, and the "Boy Who Cried Wolf" effect.

Certainly, it's no secret to anyone who has ever launched a vulnerability scan, but this state of things has persisted for decades (Tenable/Nessus, Qualys and Rapid7 are more than 20 years old!). "We give you information about vulnerabilities that we received from software vendors as is, and it's up to you how to make this data actionable". This always drove me crazy, and I am glad that some vendors have finally started to say publicly that it's not ok (of course, for their own marketing reasons).

Invisible work of keeping Vulnerability Databases up to date

It may seem that I criticize Vulnerability Management vendors because they don't validate the exploitability of vulnerabilities. Not really. I know that they have many other important things to do, especially the teams responsible for keeping Vulnerability Databases up to date.

On nearly every VM vendor's website you will find news about vulnerabilities discovered by their vulnerability researchers. From a marketing point of view, such activity seems valuable. Vulnerability researchers promote the vendor at security events and spread the message: if the researchers are competent, then the Vulnerability Management product should be good as well.

But in reality, Vulnerability Research has almost nothing to do with the main functionality of Vulnerability Scanners. How many vulnerabilities in software products can a good research team discover in several years? At best, maybe hundreds. For a relatively good Vulnerability Management solution, you need vulnerability detection rules (plugins) for more than 100,000 existing vulnerabilities! Building a good Vulnerability Management solution is not about researching new individual vulnerabilities; it's about effective aggregation and processing of poorly structured external data.

It's nearly invisible work. You will never see a VM vendor's press release announcing that their scanner now supports vulnerability detection for SuperDuperERP, even if the Security Content Developers spent lots of time and effort understanding everything about the versions, security bulletins, types of patches and plugins for this particular piece of software. And there are hundreds and thousands of such systems (Windows, Linux and Unix derivatives, network and telecom devices, ERP, databases, etc.), and their number is constantly growing. Everything is in motion: the software vendor can (and will) change its patch management logic at any time. And customers will notice your work only when you fail. Otherwise, it's assumed that the Vulnerability Scanner will magically detect every vulnerability on every system.

That's why providing even the current, far from ideal, level of vulnerability detection, based on vulnerability data received from software vendors and presented as is, requires an insane amount of unpleasant work that is nearly invisible from the outside. Manual verification of each vulnerability would require even more. No VM vendor has the resources for this.

The only possible solution is to aggregate some more data about vulnerabilities from external feeds and to use it for better vulnerability classification.

What’s wrong with CVSS?

Why do we need something better for Vulnerability Prioritization? There are several reasons:

- My favorite reason is that CVSS is subjective. Two people with the same information about a vulnerability will most likely get different CVSS scores. When choosing the right impact, you should not only know the detailed description of each option, but also keep in mind the examples from the official manual to know how it should be interpreted in different contexts.

- In December 2018, authors from Carnegie Mellon University published a great report, "Towards improving CVSS". The paper is written in an academic style, but it's very straightforward. They call CVSS "inadequate" and the CVSS v3.0 formula "not robust or justified". If you use CVSS often, I highly recommend reading it. It's only 8 pages long. They group examples of CVSS failures as follows:

- Failure to account for context (both technical and human-organizational). “CVSS does not handle the relationship(s) between vulnerabilities”, “data loss [can be] more critical than the loss of control of the device”.

- Failure to account for material consequences of vulnerability (whether life or property is threatened). “If a vulnerability will plausibly harm humans or physical property, then it is severe, but CVSS as it is does not account for this”.

- Operational scoring problems (inconsistent or clumped scores, algorithm design quibbles). “CVSS v3.0 concepts are understood with wide variability, even by experienced security professionals”, “more than half of survey respondents are not consistently within an accuracy range of four CVSS points.”

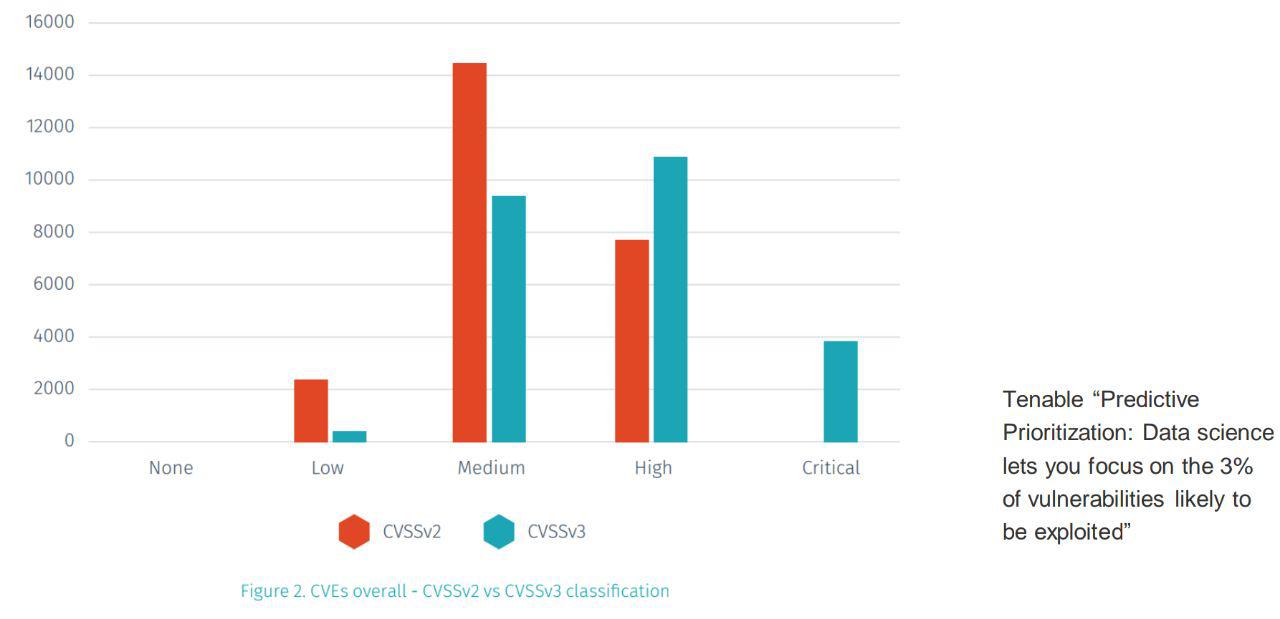

- This one I took from Tenable's "Predictive Prioritization: Data science lets you focus on the 3% of vulnerabilities likely to be exploited". In real life, CVSS produces too many critical vulnerabilities. For example, about 16,500 vulnerabilities were disclosed in NVD in 2018: 61% of them have a CVSS v.2 Base Score above 7 ("High" and "Critical" severity) and 15% above 9 ("Critical" severity). It's nearly impossible to use this for prioritization, because when everything is critical, nothing is critical.

The move from CVSS v.2 to v.3 only made it worse, because v.3 produces even more Medium/High/Critical vulnerabilities.
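To make the "everything is critical" arithmetic concrete, here is a minimal sketch that computes what share of a list of CVSS base scores sits at or above a threshold, and maps scores onto the CVSS v3 qualitative severity scale (Low 0.1-3.9, Medium 4.0-6.9, High 7.0-8.9, Critical 9.0-10.0). The thresholds are treated as inclusive lower bounds, matching the severity bands. The sample scores are made up; with real data you would load them from an NVD feed.

```python
from typing import Iterable


def share_at_or_above(scores: Iterable[float], threshold: float) -> float:
    """Fraction of CVSS base scores that are >= threshold (0.0 for no scores)."""
    values = list(scores)
    if not values:
        return 0.0
    return sum(1 for s in values if s >= threshold) / len(values)


def cvss3_severity(score: float) -> str:
    """Map a CVSS v3 base score to the v3 qualitative severity rating scale."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 - 3.9
    if score < 7.0:
        return "Medium"    # 4.0 - 6.9
    if score < 9.0:
        return "High"      # 7.0 - 8.9
    return "Critical"      # 9.0 - 10.0


# Made-up sample scores for illustration, NOT real NVD data.
sample = [9.8, 7.5, 5.3, 8.8, 10.0, 6.1, 7.2, 4.3, 9.1, 7.8]
print(f"share >= 7.0: {share_at_or_above(sample, 7.0):.0%}")
print(f"share >= 9.0: {share_at_or_above(sample, 9.0):.0%}")
print([cvss3_severity(s) for s in sample])
```

Run over a real year of NVD data, the same two calls reproduce the kind of percentages quoted above.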

CVSS + Exploit?

Well, if using only CVSS for Vulnerability Prioritization is bad, maybe we can combine it with Exploit databases? Actually, it's a good idea. Only 7% of vulnerabilities have a publicly available exploit, so if a vulnerability has an exploit, it is worth patching. Provided, of course, that the exploit actually exists and works well. Sometimes an "exploit" is just a PoC or a detection script; sometimes the type of vulnerability and the type of related exploit don't match (RCE and DoS, for example); sometimes you just have to believe that the exploit exists somewhere in a closed exploit pack, although no one has seen or used it. So, given a good exploit data feed, such a prioritization method will be quite useful.
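A minimal sketch of the "CVSS + Exploit" idea, assuming each vulnerability record carries a CVSS score plus a hypothetical exploit status taken from some exploit database. Following the caveats above, only a confirmed working exploit of the matching type raises priority; PoCs, detection scripts and rumored exploits do not. The field names, status values and placeholder IDs are illustrative, not from any real feed.

```python
from dataclasses import dataclass
from enum import Enum


class ExploitStatus(Enum):       # hypothetical status values
    NONE = 0
    RUMORED = 1                  # "exists somewhere in a closed pack", never seen
    POC_OR_DETECTION = 2         # proof of concept or detection script only
    WORKING = 3                  # confirmed working exploit


@dataclass
class Vuln:
    cve_id: str
    cvss: float
    exploit_status: ExploitStatus
    exploit_matches_type: bool = True  # False e.g. for a DoS exploit of an RCE vuln


def has_usable_exploit(v: Vuln) -> bool:
    """Only a confirmed working exploit of the matching type counts."""
    return v.exploit_status is ExploitStatus.WORKING and v.exploit_matches_type


def prioritize(vulns: list[Vuln]) -> list[Vuln]:
    """Usable exploit first, then higher CVSS first."""
    return sorted(vulns, key=lambda v: (has_usable_exploit(v), v.cvss), reverse=True)


# Placeholder IDs, not real CVEs.
vulns = [
    Vuln("CVE-A", 9.8, ExploitStatus.RUMORED),
    Vuln("CVE-B", 7.5, ExploitStatus.WORKING),
    Vuln("CVE-C", 8.1, ExploitStatus.WORKING, exploit_matches_type=False),
    Vuln("CVE-D", 6.5, ExploitStatus.POC_OR_DETECTION),
]
for v in prioritize(vulns):
    print(v.cve_id, v.cvss, has_usable_exploit(v))
```

Note that this only ranks what is exploitable today; it has no notion of what may become exploitable later.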

But this approach gives maximum priority to vulnerabilities that are exploitable right NOW. And VM vendors promise that their new Vulnerability Prioritization features will also catch vulnerabilities that are not exploitable now but are likely to be exploited in the near-term FUTURE! Which is interesting.

How does this Vulnerability Prioritization work?

Strictly speaking, everything we know comes from marketing materials, and all technical details are hidden in the VM vendor's cloud. So it could easily be some kind of magical oracle.

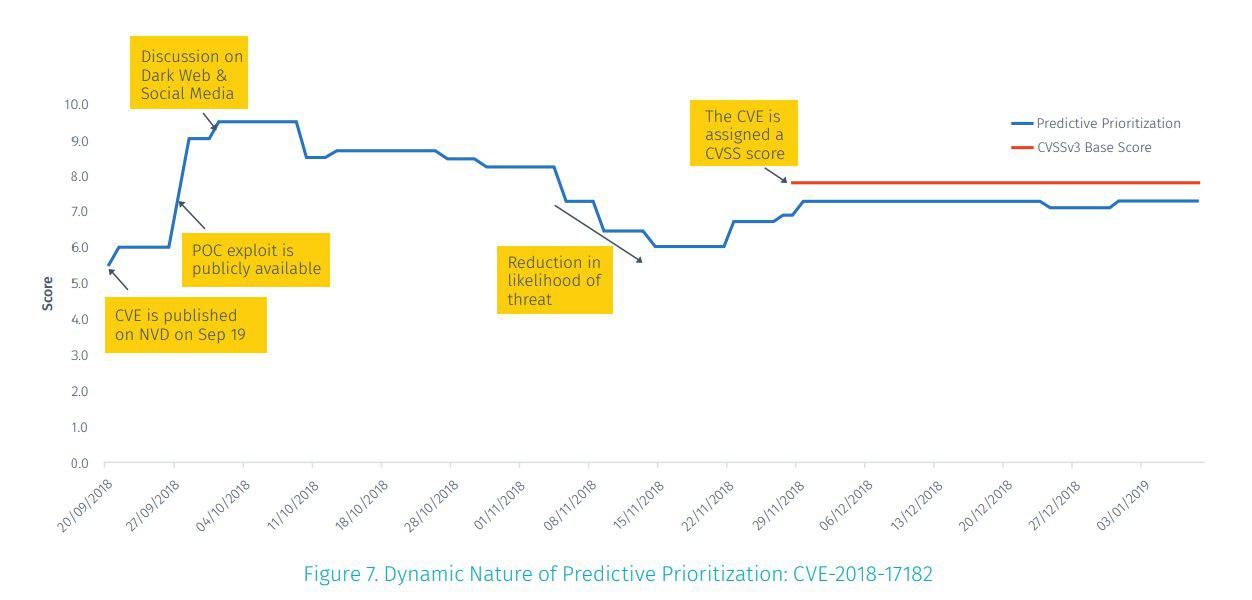

Whitepapers state that VM vendors use external feeds of vulnerability-related data and some AI tool (neural networks) to constantly recalculate a Score for each vulnerability. This Score represents the probability that the vulnerability will become exploitable in the near future.
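The vendors' actual models are not public. As a stand-in, here is a minimal sketch that uses plain logistic regression (a swapped-in technique, not the neural networks the whitepapers mention) with hand-set, hypothetical weights. It only illustrates the general shape: feed-derived features go in, a probability between 0 and 1 comes out, and re-running it as feeds change moves the score.

```python
import math

# Hypothetical hand-set weights for illustration only. A real model would be
# trained on historical exploitation data; vendors say they use neural networks.
WEIGHTS = {
    "cvss_impact": 0.4,
    "exploit_published": 2.0,
    "log_mentions": 0.8,
    "log_age_days": -0.3,
}
BIAS = -5.0


def to_features(cvss_impact: float, exploit_published: bool,
                mentions: int, age_days: int) -> dict[str, float]:
    """Turn raw feed values into model features (log1p dampens large counts)."""
    return {
        "cvss_impact": cvss_impact,
        "exploit_published": 1.0 if exploit_published else 0.0,
        "log_mentions": math.log1p(mentions),
        "log_age_days": math.log1p(age_days),
    }


def exploitation_probability(features: dict[str, float]) -> float:
    """Logistic regression: sigmoid of a weighted sum, giving a value in (0, 1)."""
    z = BIAS + sum(w * features[name] for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


# The same vulnerability re-scored as feed data changes (made-up values).
day_1 = exploitation_probability(to_features(5.9, False, mentions=3, age_days=1))
day_10 = exploitation_probability(to_features(5.9, True, mentions=200, age_days=10))
print(f"day 1: {day_1:.2f}, day 10: {day_10:.2f}")
```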

What kind of data do they use?

Actually, it's not clear, and it heavily depends on the specific vendor. So let's talk directly about Tenable and Predictive Prioritization. Tenable analyzes about 150 different aspects of each vulnerability. Some of these aspects are kept secret. The publicly known ones are:

- CVSS (Base, Exploitability, Impact scores)

- NVD (Descriptions, CWE, dates, vendors)

- Threat Intelligence, such as "Recorded Future" (attacks and exploit dates, popularity in social media and the dark web)

- Exploit Databases (entries and dates)
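Tenable's schema is not public, but the four publicly known source types above could plausibly be merged into one flat feature record per CVE. A minimal sketch, where every record type and field name is hypothetical; note that the CVSS field may legitimately be empty, since scoring can start before NVD assigns a score.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class NvdEntry:            # hypothetical subset of an NVD record
    cve_id: str
    published: date
    cwe: str
    cvss_base: Optional[float]  # None until NVD assigns a score


@dataclass
class ThreatIntel:         # hypothetical threat intelligence summary
    last_attack_seen: Optional[date]
    social_mentions: int
    darkweb_mentions: int


@dataclass
class ExploitEntry:        # hypothetical exploit database entry
    published: date


def days_between(start: Optional[date], end: Optional[date]) -> Optional[int]:
    """Whole days from start to end, or None if either date is unknown."""
    if start is None or end is None:
        return None
    return (end - start).days


def build_features(nvd: NvdEntry, intel: ThreatIntel,
                   exploits: list[ExploitEntry], today: date) -> dict:
    """Merge the four kinds of sources into one flat feature record per CVE."""
    first_exploit = min((e.published for e in exploits), default=None)
    return {
        "cve_id": nvd.cve_id,
        "cvss_base": nvd.cvss_base,
        "cwe": nvd.cwe,
        "age_days": days_between(nvd.published, today),
        "exploit_count": len(exploits),
        "days_to_first_exploit": days_between(nvd.published, first_exploit),
        "days_since_last_attack": days_between(intel.last_attack_seen, today),
        "social_mentions": intel.social_mentions,
        "darkweb_mentions": intel.darkweb_mentions,
    }


# Made-up example record with a placeholder CVE ID.
features = build_features(
    NvdEntry("CVE-XXXX-0001", date(2019, 1, 10), "CWE-79", None),
    ThreatIntel(date(2019, 5, 1), social_mentions=42, darkweb_mentions=5),
    [ExploitEntry(date(2019, 2, 1)), ExploitEntry(date(2019, 1, 20))],
    today=date(2019, 5, 21),
)
print(features)
```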

As a result, they get the 3% most critical vulnerabilities and recommend fixing them first. You can even decide to fix only these vulnerabilities, reducing the burden on your IT colleagues, so they will hate you a little less.
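Selecting "the top 3%" is simple once every vulnerability has a score. A minimal sketch, assuming scores are already available as a plain dictionary of ID to score (a hypothetical input shape); it always returns at least one item so that small environments still get a list.

```python
import math


def top_fraction(scores: dict[str, float], fraction: float = 0.03) -> list[str]:
    """IDs of the highest-scoring `fraction` of vulnerabilities (at least one)."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    if not scores:
        return []
    # round() guards against floating-point noise in len * fraction
    k = max(1, math.ceil(round(len(scores) * fraction, 9)))
    return sorted(scores, key=lambda vid: scores[vid], reverse=True)[:k]


# Made-up scores: V0 has the lowest score, V99 the highest.
scores = {f"V{i}": i / 10 for i in range(100)}
print(top_fraction(scores))
```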

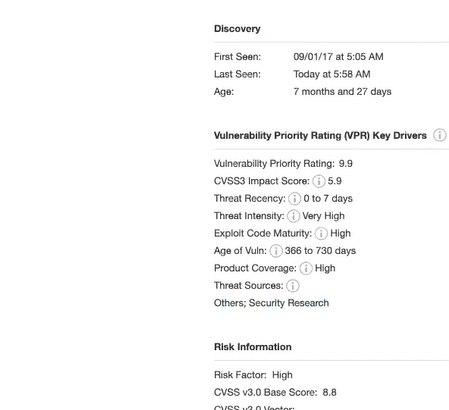

The feature is available in TenableIO and TenableSC. Prioritization scores (Vulnerability Priority Rating, VPR) are updated daily for all CVEs. Vulnerabilities without a CVE are currently out of scope. If a detection plugin covers multiple CVEs, the plugin itself gets the highest VPR among all its CVEs. Assigning VPR to the detection plugin is, IMHO, pretty ugly, but it's necessary until Tenable gets rid of its monstrous patch-based detection plugins.
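The plugin-level rule described above boils down to taking a maximum. A minimal sketch with placeholder CVE IDs and made-up VPR values; CVEs without a known VPR are simply skipped, which is an assumption on my side.

```python
from typing import Optional


def plugin_vpr(cve_vpr: dict[str, float], plugin_cves: list[str]) -> Optional[float]:
    """A plugin inherits the highest VPR among its CVEs.

    CVEs with no known VPR are skipped; returns None if none of them has one.
    """
    known = [cve_vpr[c] for c in plugin_cves if c in cve_vpr]
    return max(known) if known else None


# Hypothetical patch-based plugin covering three CVEs (placeholder IDs).
vpr = {"CVE-A": 5.9, "CVE-B": 9.0, "CVE-C": 3.6}
print(plugin_vpr(vpr, ["CVE-A", "CVE-B", "CVE-C"]))
```

This also shows why the approach is ugly: a single high-VPR CVE makes the whole patch-based plugin look urgent, hiding that its other CVEs may be much less important.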

Tenable produces a VPR even before the vulnerability gets a CVSS score in NVD, and updates it when new information about the vulnerability appears.

And it's not just a single magic number. Tenable also provides several subscores that you can use for prioritization.

They call them "Key drivers":

- CVSSv3 impact score

- threat recency

- threat intensity

- exploit code maturity

- age of the vulnerability

- product coverage

- threat sources
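One way to use the key drivers directly is to filter and sort on them yourself. A minimal sketch, where the field names, the use of days for threat recency, and the exploit code maturity labels and their ordering are all assumptions (modeled on CVSS temporal-metric wording), not Tenable's documented export format.

```python
from dataclasses import dataclass

# Assumed ordering of exploit code maturity labels; check your product's values.
MATURITY_RANK = {"Unproven": 0, "PoC": 1, "Functional": 2, "High": 3}


@dataclass
class KeyDrivers:                 # hypothetical subset of the key drivers
    cve_id: str
    cvss3_impact: float
    threat_recency_days: int
    exploit_code_maturity: str


def actively_threatened(items: list[KeyDrivers], max_recency_days: int = 30,
                        min_maturity: str = "Functional") -> list[KeyDrivers]:
    """Keep recent threats with mature exploits, highest impact first."""
    floor = MATURITY_RANK[min_maturity]
    picked = [
        d for d in items
        if d.threat_recency_days <= max_recency_days
        and MATURITY_RANK.get(d.exploit_code_maturity, 0) >= floor
    ]
    return sorted(picked, key=lambda d: d.cvss3_impact, reverse=True)


# Made-up values with placeholder IDs.
items = [
    KeyDrivers("CVE-A", 5.9, 7, "High"),
    KeyDrivers("CVE-B", 6.0, 90, "High"),       # threat activity too old
    KeyDrivers("CVE-C", 5.2, 3, "PoC"),         # exploit not mature enough
    KeyDrivers("CVE-D", 3.6, 14, "Functional"),
]
print([d.cve_id for d in actively_threatened(items)])
```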

Can we make such Vulnerability Prioritization on our own?

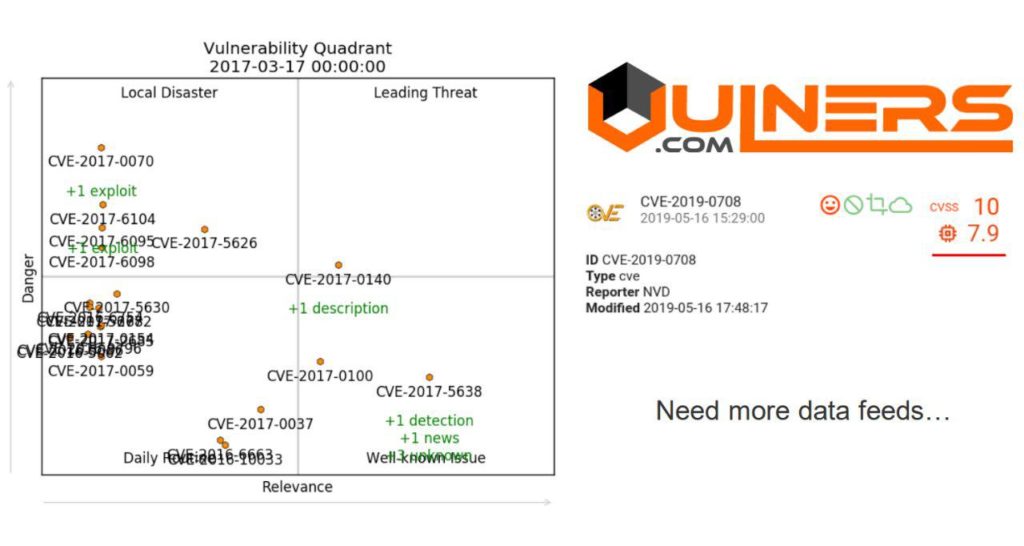

The idea of using vulnerability related data feeds and machine learning for better vulnerability prioritization is not new. For example, 2 years ago, I demonstrated Vulnerability Quadrants.

Vulnerability Quadrants used crosslinks between Vulners security objects (and their history) to assess the danger and media relevance of vulnerabilities. Vulners also computes a CVSS-like prioritization score by analyzing vulnerability descriptions with neural networks. So the main components are already here, and the topic is quite trendy. But such functionality critically depends on security data feeds, their limitations and quality. Exploit, malware and attack data carefully mapped to vulnerabilities is the most valuable. Here the major Vulnerability Management vendors have a natural advantage, because they can simply purchase all the necessary feeds on the market and hire better data scientists to process them effectively (there are currently about 7 people in the Tenable data science team, out of ~1400 employees in the company). They also already know how to make a profit from this feature.

But with sufficient funding, it's repeatable and doable.
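The exact Vulnerability Quadrants formulas are not given in this post, so the following is only a hypothetical reconstruction of the general idea: count the security objects linked to a vulnerability, split them into a "danger" axis and a "media relevance" axis, and place the vulnerability into one of four quadrants by thresholds. The object type names and thresholds are assumptions and do not reflect the Vulners API.

```python
from collections import Counter

# Hypothetical grouping of linked object types into the two axes.
DANGER_TYPES = {"exploit", "malware", "attack"}
MEDIA_TYPES = {"news", "blog", "social"}


def axis_scores(linked_types: list[str]) -> tuple[int, int]:
    """Count linked objects on the danger axis and on the media axis."""
    counts = Counter(linked_types)  # missing types count as 0
    danger = sum(counts[t] for t in DANGER_TYPES)
    media = sum(counts[t] for t in MEDIA_TYPES)
    return danger, media


def quadrant(linked_types: list[str], danger_threshold: int = 1,
             media_threshold: int = 3) -> str:
    """Place a vulnerability into one of four quadrants by the two axis scores."""
    danger, media = axis_scores(linked_types)
    high_danger = danger >= danger_threshold
    high_media = media >= media_threshold
    if high_danger and high_media:
        return "dangerous and hyped"
    if high_danger:
        return "dangerous but quiet"
    if high_media:
        return "hyped but not (yet) dangerous"
    return "low priority"


print(quadrant(["exploit", "news", "news", "blog"]))
```

The "dangerous but quiet" quadrant is arguably the most interesting one: exploit data exists, but nobody is talking about it yet.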

In conclusion

I think it's really awesome that VM vendors are working on better Vulnerability Prioritization. But I would like to share my concerns about such features (in their current implementation):

- Lack of visibility. Tenable shows "Key drivers", but that's not enough to justify the need for patching. I would like to see a complete report showing WHY the vulnerability is critical or may become critical, with links to security objects and events.

- It’s only for Enterprise-level products. Tenable doesn’t provide Predictive Prioritization in Nessus Professional. I certainly would like to see this as basic functionality in vulnerability scanners.

- Fixing only the vulnerabilities chosen by AI might be dangerous. What if a vulnerability marked as non-critical gets exploited by an attacker? Who will be responsible? (You)

- We need to go deeper. Prioritization that doesn't distinguish between asset types and network structure can't be effective. We need good Asset Management first, and the ability to predict possible attack scenarios and paths.

Hi! My name is Alexander and I am a Vulnerability Management specialist. You can read more about me here. Currently, the best way to follow me is my Telegram channel @avleonovcom. I update it more often than this site. If you haven’t used Telegram yet, give it a try. It’s great. You can discuss my posts or ask questions at @avleonovchat.

And I invite all Russian speakers to one more Telegram channel, @avleonovrus; that's where I now post first.

